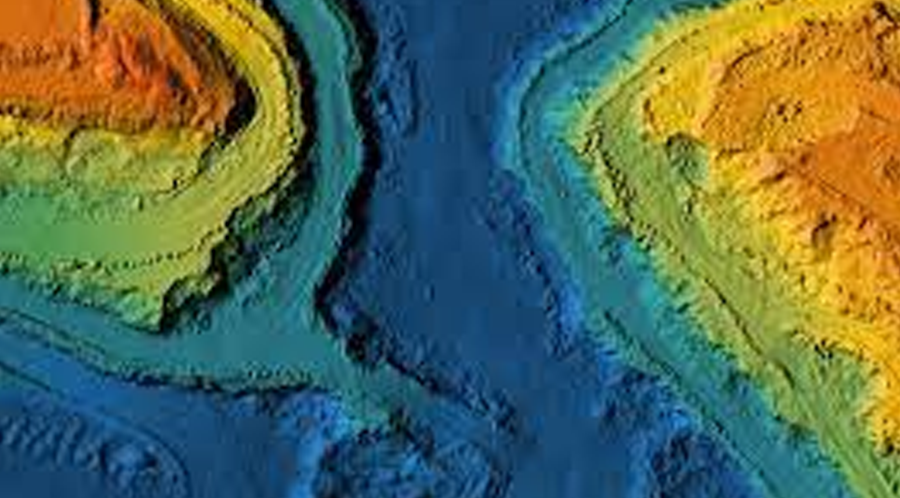

An international team of researchers has sped up the modelling of large geospatial datasets, while retaining benchmark accuracy, by running it on a computing system based on highly parallelized graphics processing units.

Computers can perform large calculations in a short time. However, because a digital system stores each number in a fixed number of bits, any result that needs more digits than the format can hold is rounded or truncated. In geospatial datasets, these precision errors can accumulate and lead to faulty modelling outcomes. The latest development in the field appears to offer an elegant solution to this problem.
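To make the accumulation of precision errors concrete, here is a minimal Python sketch. It is an illustration only, not taken from the researchers' work. It adds the same small value many times, once in double precision and once with every intermediate result rounded to single precision. The helper names `to_float32` and `accumulate` are invented for this example.

```python
import struct


def to_float32(x: float) -> float:
    """Round a Python float (double precision) to the nearest single-precision value."""
    return struct.unpack("f", struct.pack("f", x))[0]


def accumulate(value: float, count: int, single: bool) -> float:
    """Add `value` to a running total `count` times.

    With single=True, the value and every partial sum are rounded to
    single precision, imitating arithmetic carried out in 32-bit floats.
    """
    step = to_float32(value) if single else value
    total = 0.0
    for _ in range(count):
        total = total + step
        if single:
            total = to_float32(total)
    return total


count = 100_000
exact = 10_000.0  # 0.1 * 100,000 in exact arithmetic
print("double-precision error:", abs(accumulate(0.1, count, single=False) - exact))
print("single-precision error:", abs(accumulate(0.1, count, single=True) - exact))
```

The single-precision total drifts much further from the exact answer than the double-precision one, which is the kind of accumulated error that can distort a model built on millions of such operations.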

“For decades, modelling of environmental data relied on double-precision arithmetic to predict missing data,” says the team. “Today, there is high-performance computing hardware that can run single- and half-precision arithmetic with a speedup of 16 and 32 times compared with double-precision arithmetic. To take advantage of this, we propose a three-precision framework that can exploit the acceleration of lower precision while maintaining accuracy by using double-precision arithmetic for vital information.”
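The article does not spell out how the three precisions are assigned, so the following Python sketch rests on an assumption: entries of a covariance matrix that link nearby locations (close to the diagonal) are treated as the "vital information" and kept in double precision, while entries further away are stored in single and then half precision. The band widths, the exponential covariance function, and all function names are hypothetical choices made for illustration. This is not the team's implementation.

```python
import math
import struct

# struct format codes: "d" = double (8 bytes), "f" = single (4 bytes), "e" = half (2 bytes)


def round_to(x: float, fmt: str) -> float:
    """Round x to the precision of the given struct format."""
    return struct.unpack(fmt, struct.pack(fmt, x))[0]


def pick_format(i: int, j: int, double_band: int, single_band: int) -> str:
    """Assumed rule: the closer an entry is to the diagonal, the higher its precision."""
    distance = abs(i - j)
    if distance <= double_band:
        return "d"
    if distance <= single_band:
        return "f"
    return "e"


def mixed_precision_covariance(locations, length_scale, double_band, single_band):
    """Return (exact, mixed, stored_bytes) for an exponential covariance matrix."""
    n = len(locations)
    exact = [
        [math.exp(-abs(locations[i] - locations[j]) / length_scale) for j in range(n)]
        for i in range(n)
    ]
    mixed = [[0.0] * n for _ in range(n)]
    stored_bytes = 0
    for i in range(n):
        for j in range(n):
            fmt = pick_format(i, j, double_band, single_band)
            mixed[i][j] = round_to(exact[i][j], fmt)
            stored_bytes += struct.calcsize(fmt)
    return exact, mixed, stored_bytes


locations = [0.1 * k for k in range(20)]
exact, mixed, stored_bytes = mixed_precision_covariance(locations, 0.5, 2, 8)
max_error = max(abs(exact[i][j] - mixed[i][j]) for i in range(20) for j in range(20))
print("largest entry error:", max_error)
print("bytes stored:", stored_bytes, "versus", 20 * 20 * 8, "in full double precision")
```

In this toy setting the entries near the diagonal are stored exactly as computed, and the rounding error is confined to the weaker, long-range correlations, while the matrix takes up less memory than a full double-precision copy.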

“The main goal of this project is to leverage the recent parallel linear algebra algorithms developed by KAUST’s Extreme Computing Research Centre to scale up geospatial statistics applications on leading-edge parallel architectures,” they added.

The next step for the team will be to combine approximation techniques with mixed precision to reduce the memory footprint and shorten calculation time.